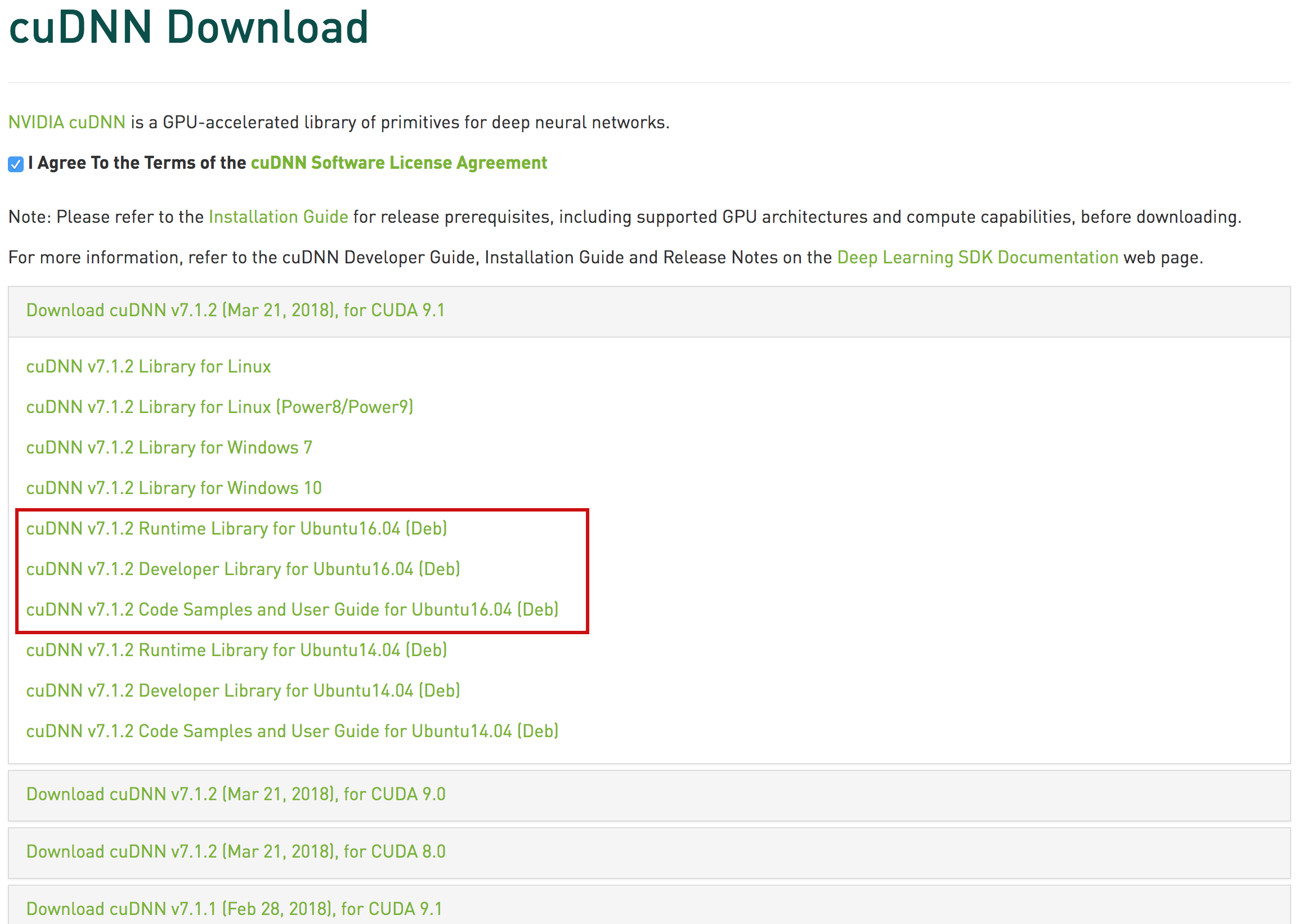

These are the NVIDIA cuDNN 9.0.0 Release Notes. They include fixes from previous cuDNN releases as well as the following additional changes.

This is the first major version bump of cuDNN in almost 4 years. There are some exciting new features, and also some changes that may be disruptive to current applications built against prior versions of cuDNN. The cuDNN library is reorganized into several sub-libraries, which, in a future cuDNN version, will allow more flexibility in loading selected parts of the library. cuDNN no longer depends on the cuBLAS library; instead, it now depends on the cuBLASLt library for certain primitive linear algebra operators. For a list of added, deprecated, and removed APIs, refer to API Changes for cuDNN 9.0.0. For more information, refer to the API Overview.

The following features and enhancements have been added to this release:

- The definition of `CUDNN_VERSION` has been changed from `CUDNN_MAJOR * 1000 + CUDNN_MINOR * 100 + CUDNN_PATCHLEVEL` to `CUDNN_MAJOR * 10000 + CUDNN_MINOR * 100 + CUDNN_PATCHLEVEL`. Refer to Version Checking Against CUDNN_VERSION in the cuDNN Developer Guide.
- cuDNN now has RPM and Debian meta-packages available for easy installation. Installing the meta-package pulls in the latest available cuDNN for the latest available CUDA version. Refer to the cuDNN Installation Guide for more details.
- Starting with cuDNN 9.0.0, an important subset of operation graphs is hardware forward compatible: cuDNN 9.0.0 and subsequent releases will work on all current and future GPU architectures, subject to specific constraints documented in the cuDNN Developer Guide.
- The cuDNN backend API uses less memory for many execution plans, which should benefit users who cache execution plans.
- FP16 and BF16 fused flash attention engine performance has been significantly improved for NVIDIA GPUs:
  - Speed-up of up to 50% over cuDNN 8.9.7 on Hopper GPUs.
  - Speed-up of up to 100% over cuDNN 8.9.7 on Ampere GPUs.
- Expanded support of FP16 and BF16 flash attention by adding the gradient for relative positional encoding on NVIDIA Ampere GPUs.
- The fusion engine enables pointwise operations in the mainloop to be fused on both input A and input B for MatMul. The fused pointwise operation can be a scalar, row broadcast, column broadcast, or full tensor pointwise operation. Mixed precision is also supported for both input A and B. This new feature is only available for NVIDIA Hopper GPUs.
- Updated the cuDNN Graph API execution plan serialization JSON schema to version 3.
- Introduced more specific error codes and error categories (`BAD_PARAM`, `NOT_SUPPORTED`, `INTERNAL_ERROR`, `EXECUTION_FAILED`), which help with checking errors at these two levels of granularity.

For previously released cuDNN documentation, refer to the cuDNN Documentation Archives.
